Your AI Agent Needs DEPLOY, Not Just a Prompt

A prompt is not enough to build a useful AI agent. Learn the DEPLOY framework for defining mission, identity, resources, guardrails, connections, and earned autonomy.

Most people are trying to build AI agents with better prompts.

That is understandable.

Prompts are the visible part. They are easy to tweak. They give you immediate feedback. Change a few words and the output changes.

But a prompt is not an agent strategy.

A prompt can produce an answer.

An agent has to carry responsibility.

That is a much bigger design problem.

If you want an AI system to do useful work inside a business, you cannot stop at “act like a helpful assistant” or “write this in my tone.”

You need to answer a different set of questions.

What does this agent own?

Who does it serve?

What knowledge can it trust?

What tools can it use?

What is outside its authority?

Who reviews its work?

When should it stop and escalate?

What would make you trust it with more responsibility?

That is where most AI efforts break.

Not because the model is useless.

Because the agent was never actually deployed.

It was prompted.

There is a difference.

A useful agent needs more than instructions

Think about how you would bring a new person onto your team.

You would not just hand them a sticky note that says, “Be helpful.”

You would explain the role.

You would tell them what success looks like.

You would give them access to the right files, systems, and context.

You would explain what they can decide on their own and what needs approval.

You would show them who they work with.

You would check their work until trust is earned.

AI agents need the same kind of leadership.

Not because they are people.

Because they are being placed into human systems that already depend on trust, context, authority, and judgment.

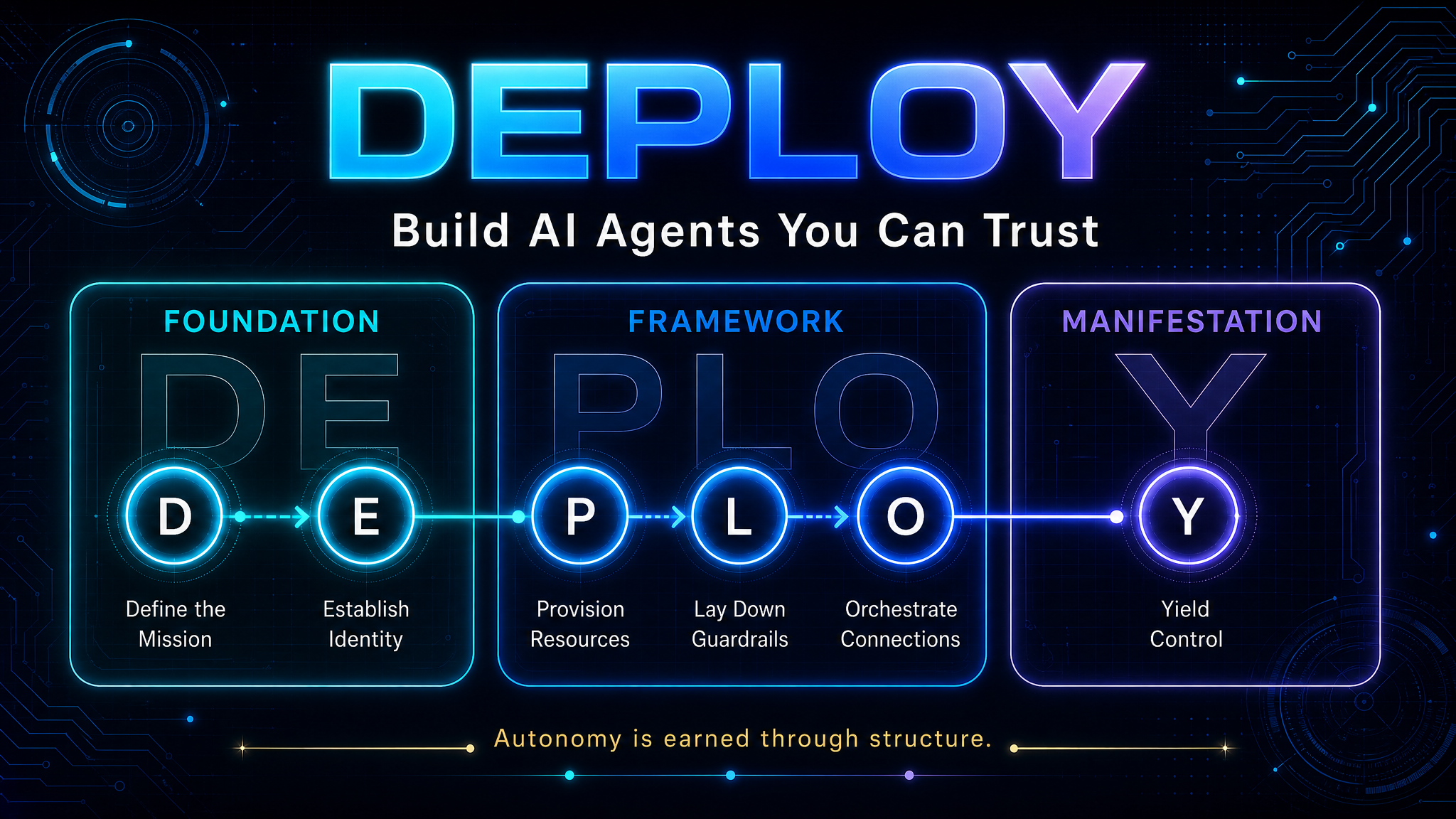

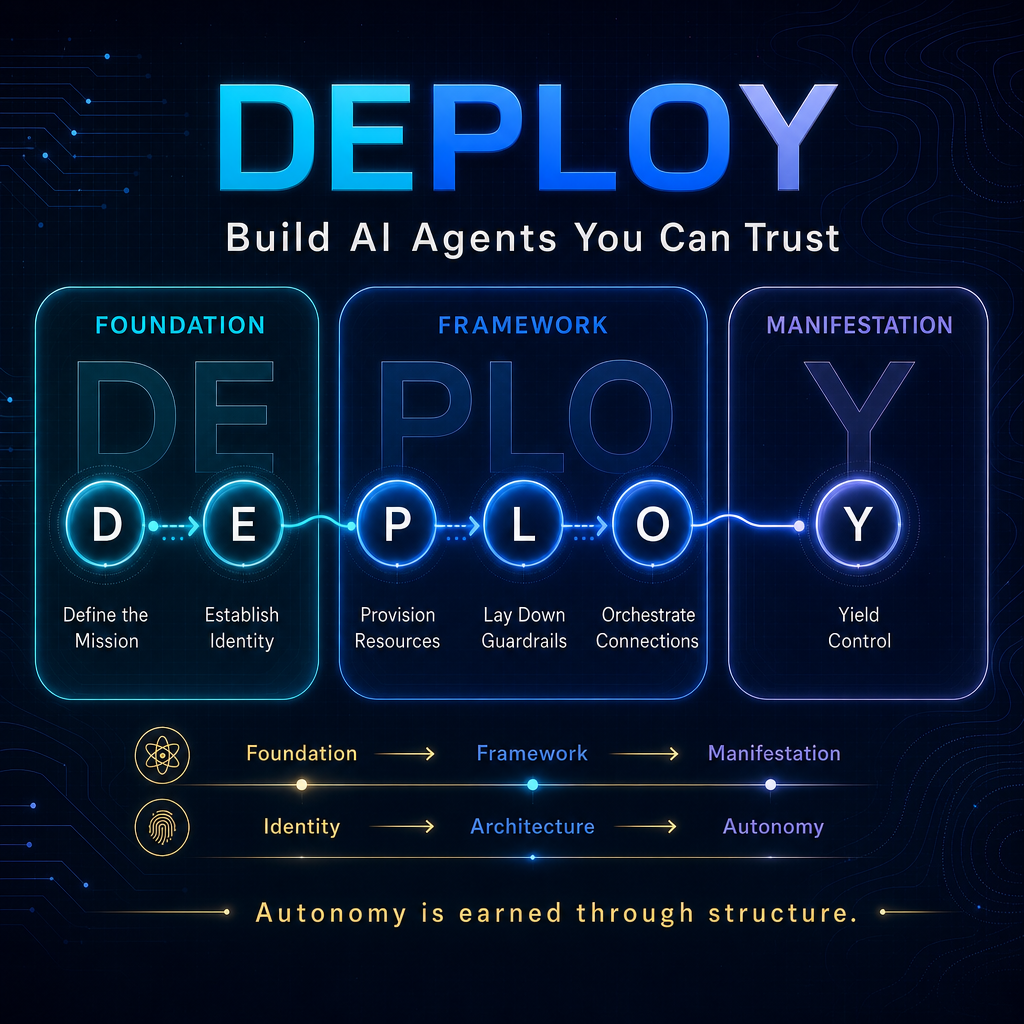

This is why I use the DEPLOY framework.

DEPLOY is a simple way to move from random AI usage to intentional agent design.

It has six steps:

- Define the Mission

- Establish Identity

- Provision Resources

- Lay Down Guardrails

- Orchestrate Connections

- Yield Control

That sequence matters.

You do not start with autonomy.

You earn autonomy through structure.

D — Define the Mission

The first mistake is treating an agent like a task machine.

“Write this email.”

“Summarize this document.”

“Make a social post.”

Those are tasks.

A mission is different.

A mission defines responsibility.

Instead of “write posts,” a content agent might be responsible for preparing weekly draft content from approved source material so the human leader can review faster and publish more consistently.

That is a role.

It has a purpose.

It has a customer.

It has a standard.

A good mission should answer four questions:

- What is this agent responsible for?

- Who does it serve?

- What does success look like?

- What is explicitly not its job?

That last question matters.

Without boundaries, the agent drifts.

And when agents drift, leaders lose trust.
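
One way to keep yourself honest here is to write the mission down as a small record and check it for blanks. The sketch below is only an illustration: the `Mission` class, its field names, and the `gaps` helper are hypothetical, and the example values paraphrase the content agent described above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Mission:
    responsibility: str           # What is this agent responsible for?
    serves: str                   # Who does it serve?
    success: str                  # What does success look like?
    not_its_job: tuple[str, ...]  # What is explicitly not its job?

    def gaps(self) -> list[str]:
        """Return the names of any mission questions left unanswered."""
        missing = [
            name
            for name in ("responsibility", "serves", "success")
            if not getattr(self, name).strip()
        ]
        if not self.not_its_job:
            missing.append("not_its_job")
        return missing


content_mission = Mission(
    responsibility="Prepare weekly draft content from approved source material",
    serves="The human leader who reviews and publishes",
    success="Faster review and more consistent publishing",
    not_its_job=(),  # boundaries have not been written down yet
)

print(content_mission.gaps())  # ['not_its_job']
```

The empty boundary list is the one that gets flagged. That is the question most missions skip.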

E — Establish Identity

Identity is not cute branding.

It is operational clarity.

An agent with no identity becomes generic. It tries to satisfy every request the same way. It has no stable perspective, no clear voice, no expertise profile, and no priority order.

Identity gives the system a consistent way to operate.

Name the agent.

Define its expertise.

Define its tone.

Define its authority level.

Define what it protects.

For example, a community agent should not sound like a legal reviewer, a hype marketer, or a founder pretending to have unlimited time.

It should be helpful, clear, grounded, and trust-preserving.

That identity shapes the work.

It tells the agent how to decide when there is ambiguity.

The better the identity, the less you have to micromanage the output.
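
If it helps to see identity as something you can review and version, here is a minimal sketch. The `Identity` class and its `preamble` method are hypothetical. The tone values come from the community agent example above. The name, expertise, authority, and "protects" values are assumptions added for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    name: str
    expertise: str
    tone: tuple[str, ...]
    authority_level: str
    protects: str

    def preamble(self) -> str:
        """Render the identity as a short block of standing instructions."""
        return "\n".join(
            [
                f"You are {self.name}.",
                f"Expertise: {self.expertise}.",
                f"Tone: {', '.join(self.tone)}.",
                f"Authority: {self.authority_level}.",
                f"Above all, protect: {self.protects}.",
            ]
        )


community_agent = Identity(
    name="Community Guide",  # hypothetical name
    expertise="answering member questions from approved material",  # assumed
    tone=("helpful", "clear", "grounded", "trust-preserving"),
    authority_level="drafts replies; a human approves anything sensitive",  # assumed
    protects="member trust",  # assumed
)

print(community_agent.preamble())
```

The rendered text is only the visible part. The record behind it is what you review and change when the agent's role changes.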

P — Provision Resources

A lot of AI systems fail because they are asked to perform with no reliable context.

The agent is expected to produce good work while guessing from memory, hallucinating around missing details, or relying on whatever someone pasted into a chat window.

That is not deployment.

That is improvisation.

Resources usually fall into four categories:

- Knowledge: approved facts, brand voice, strategy, policies, examples

- Tools: the systems it can use to do the work

- Workspace: where drafts, notes, logs, and outputs should live

- Connections: people, agents, or workflows it needs to coordinate with

Start lean.

Do not give the agent every tool on day one.

Give it the minimum viable toolkit needed to do the job safely.

Then expand access as trust is earned.

That is how you onboard people.

It is also how you deploy agents.
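
"Start lean, then expand" can be sketched as an allowlist that refuses anything not yet granted. This is a minimal illustration, not a real permissions system: the `Toolkit` class and the tool names are hypothetical.

```python
from __future__ import annotations


class Toolkit:
    """An allowlist: the agent may use only tools it has been granted."""

    def __init__(self, granted: set[str]) -> None:
        self._granted = set(granted)

    def grant(self, tool: str) -> None:
        """Expand access, for example after a review period goes well."""
        self._granted.add(tool)

    def require(self, tool: str) -> None:
        """Raise PermissionError unless the tool has been granted."""
        if tool not in self._granted:
            raise PermissionError(f"{tool!r} is not in this agent's toolkit")


# Day one: a lean, hypothetical toolkit for a content agent.
toolkit = Toolkit({"read_approved_docs", "save_draft"})
toolkit.require("save_draft")  # granted, so this passes silently

try:
    toolkit.require("publish_post")  # not granted yet
except PermissionError as err:
    print(err)

toolkit.grant("publish_post")  # later, once trust is earned
toolkit.require("publish_post")  # now passes
```

The design choice is the default: anything not on the list is refused, so adding a tool is always a deliberate decision.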

L — Lay Down Guardrails

Guardrails are not there to make the agent weak.

Guardrails are what make trust possible.

If you do not define boundaries, every useful agent becomes a risk surface.

Three guardrail sentences can change everything:

- You can always...

- You must ask before...

- You should stop if...

For example:

You can always draft internal summaries from approved materials.

You must ask before sending anything external.

You should stop if the request involves legal claims, money movement, personal health, private data, or reputation risk.

That is not bureaucracy.

That is leadership.

The stronger the guardrails, the easier it becomes to give the agent more room over time.
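
The three sentences map naturally onto three outcomes: allow, ask, stop. Here is a minimal sketch under stated assumptions. The action names and the `check` function are hypothetical, the stop topics are taken from the example above, and treating every unlisted action as "ask" is my assumption, not a rule the framework dictates.

```python
from __future__ import annotations

from enum import Enum


class Decision(Enum):
    ALLOW = "allow"  # You can always...
    ASK = "ask"      # You must ask before...
    STOP = "stop"    # You should stop if...


# Hypothetical action name for the "can always" example.
ALWAYS_ALLOWED = frozenset({"draft_internal_summary"})

# Topics from the "should stop" example.
STOP_TOPICS = frozenset(
    {
        "legal claims",
        "money movement",
        "personal health",
        "private data",
        "reputation risk",
    }
)


def check(action: str, topics: set[str]) -> Decision:
    """Classify a proposed action; stop rules win over everything else."""
    if STOP_TOPICS & topics:
        return Decision.STOP
    if action in ALWAYS_ALLOWED:
        return Decision.ALLOW
    # Assumption: anything not explicitly allowed needs a human's approval.
    return Decision.ASK


print(check("draft_internal_summary", set()))             # Decision.ALLOW
print(check("send_external_email", set()))                # Decision.ASK
print(check("draft_internal_summary", {"private data"}))  # Decision.STOP
```

Note the order of the checks. A stop condition overrides an action that would otherwise be always allowed.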

O — Orchestrate Connections

A solo agent can be useful.

A connected agent can become transformational.

Connections answer three questions:

- What comes in?

- What goes out?

- Who or what depends on the result?

A research agent might receive a topic, gather sources, produce a brief, and hand it to a writing agent.

A writing agent might create a draft, flag claims that need review, and hand it to a human approver.

A metrics agent might watch results and feed lessons back into the next content cycle.

That is where AI stops being a novelty and starts becoming a system.

But the handoffs have to be designed.

Otherwise the output dies in a chat thread, a folder, or a dashboard nobody checks.
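
A designed handoff can be as simple as a routing table that refuses to accept output with nowhere to go. The sketch below is illustrative only: the agent names, the `ROUTES` table, and the `hand_off` function are hypothetical, and routing the metrics agent back to the research agent is my assumption about what "the next content cycle" means.

```python
from __future__ import annotations

from dataclasses import dataclass

# Who receives each agent's output. Every sender needs a destination.
ROUTES = {
    "research_agent": "writing_agent",
    "writing_agent": "human_approver",
    "metrics_agent": "research_agent",  # assumed: lessons feed the next cycle
}


@dataclass(frozen=True)
class Handoff:
    sender: str
    receiver: str
    payload: dict


def hand_off(sender: str, payload: dict) -> Handoff:
    """Address the payload to its designed receiver, or fail loudly."""
    receiver = ROUTES.get(sender)
    if receiver is None:
        raise LookupError(f"{sender} has no designed handoff")
    return Handoff(sender, receiver, payload)


# Placeholder payloads; a real system would carry actual briefs and drafts.
brief = hand_off("research_agent", {"topic": "agent onboarding", "sources": []})
draft = hand_off("writing_agent", {"draft": "...", "claims_to_review": []})

print(brief.receiver)  # writing_agent
print(draft.receiver)  # human_approver
```

An agent missing from the table raises an error instead of quietly producing work nobody picks up.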

Y — Yield Control

Yield Control is the hardest step.

It is also the step everyone wants to jump to.

People hear “AI agents” and immediately imagine full autonomy.

But autonomy is not the starting point.

Autonomy is the result of good design.

You yield control only after the mission is clear, the identity is established, the resources are available, the guardrails are in place, and the connections are working.

Then you let the agent run inside a defined lane.

You set a review cadence.

You judge outcomes, not activity.

You improve the system instead of constantly interrupting the work.

That is the shift from Drifter to Commander.

The Drifter keeps poking the tool, changing prompts, and reacting to every output.

The Commander designs the system, lets it operate, reviews the results, and improves the architecture.
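
Earned autonomy can be made concrete by tying the review level to review outcomes. This is a sketch, not a prescription: the level names, the `AutonomyTracker` class, and the threshold of five consecutive approvals are all assumptions chosen for illustration.

```python
LEVELS = ("review_everything", "review_samples", "review_on_cadence")
PROMOTE_AFTER = 5  # assumed: consecutive approved reviews needed to move up


class AutonomyTracker:
    """Widen the agent's lane after good reviews; narrow it after a bad one."""

    def __init__(self) -> None:
        self.level = 0   # index into LEVELS; start fully supervised
        self.streak = 0  # consecutive approved reviews at this level

    def record_review(self, approved: bool) -> str:
        """Record one review outcome and return the current review level."""
        if approved:
            self.streak += 1
            if self.streak >= PROMOTE_AFTER and self.level < len(LEVELS) - 1:
                self.level += 1
                self.streak = 0
        else:
            # A rejected output resets the streak and drops one level.
            self.streak = 0
            self.level = max(0, self.level - 1)
        return LEVELS[self.level]


tracker = AutonomyTracker()
for _ in range(5):
    current = tracker.record_review(approved=True)
print(current)                                 # review_samples
print(tracker.record_review(approved=False))   # review_everything
```

The tracker only looks at review outcomes. How busy the agent was never enters into it.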

DEPLOY creates earned autonomy

The goal of DEPLOY is not to make AI sound impressive.

The goal is to make AI useful enough to trust.

That requires more than a prompt.

It requires mission.

Identity.

Resources.

Guardrails.

Connections.

Earned autonomy.

That is how you build an agent that can do real work without becoming reckless.

And once you can deploy one agent well, the next challenge appears.

How do multiple agents, humans, workflows, knowledge systems, permissions, and review loops work together?

That is the operating model question.

DEPLOY builds the agent.

COMMAND builds the system around it.

We will go there next.

For now, start with one agent.

Give it a mission.

Give it an identity.

Give it the resources it needs.

Give it guardrails.

Connect it to the workflow.

Then, carefully, yield control.

That is how you move from prompting AI to deploying leverage.

AI for your business.

Humanity for your life.

That is the point.

If you want a clearer path from AI experiments to real business leverage, download the AI Ascension Guide.

If you are building this in real time and want support, join the free IMPACT AI Command Center.

If your business is ready to design its first real AI agent deployment, book a call.

Dan Gentry

TEDx Speaker · AI Strategist · Founder, Third Power Performance
